---- Modern Data Engineering · Instructor-Led Workshop

Build Data Pipelines that Scale at Speed.

From ingestion to transformation to orchestration — master dbt, Spark, Snowflake, and Azure Data Factory across three progressive levels. Build production-grade data platforms and land roles that matter.

dbt Certified · Databricks Associate · SnowPro Core

3× Workshop Levels

40+ Hands-on Labs

2× Microsoft Exams Covered

96% PL-300 Pass Rate

---- Three Levels

Choose Your Data Engineering Level.

Level 01 — Beginner

SQL, Python & Data Fundamentals

Start your data engineering journey with the tools every data professional relies on — advanced SQL, Python for data, pandas, and cloud storage fundamentals. Built for career changers and graduates entering the field.

Key Modules

Advanced SQL — window functions, CTEs, performance tuning

Python for data — pandas, polars, and data wrangling

Cloud storage basics — Azure ADLS, S3, GCS fundamentals

Introduction to dbt — models, sources, and testing

Data modelling concepts — star schema and dimensional design

dbt Fundamentals · SnowPro Core Prep

Beginner · All Backgrounds · 2 Days · Labs Included

GBP £549 per person / INR ₹29K per person

⭐ Most Popular

Level 02 — Intermediate

Modern Data Stack & Lakehouse

Master the production-grade modern data stack — dbt Cloud, Snowflake, Azure Data Factory, and Delta Lake. Build end-to-end ELT pipelines that are tested, documented, and CI/CD-ready.

Key Modules

dbt Cloud — advanced models, macros, packages, exposures

Snowflake — warehouses, data sharing, performance optimisation

Azure Data Factory — pipelines, triggers, and ADF patterns

Delta Lake — ACID transactions, time travel, and Z-ordering

Pipeline orchestration with Apache Airflow & Dagster

Data quality — Great Expectations, dbt tests, and alerting

dbt Developer Cert · SnowPro Core · DP-203 Foundation

Intermediate · Engineers & Analysts · 3 Days · Live Labs

GBP £895 per person / INR ₹49K per person

Level 03 — Advanced

Spark, Streaming & Data Platform Engineering

Go deep on distributed computing, real-time streaming, and enterprise data platform design. Build Databricks-native pipelines with Unity Catalog governance, Kafka streaming architectures, and Data Vault 2.0 modelling.

Key Modules

Apache Spark & PySpark — optimisation, broadcast, partitioning

Databricks — Unity Catalog, Asset Bundles, Workflows

Apache Kafka & Spark Structured Streaming

Data Vault 2.0 — hubs, links, satellites, and business vault

Platform engineering — IaC, Terraform, CI/CD for data

Data mesh principles and federated governance at scale

Databricks Associate DE · DP-203

Advanced · Senior Engineers · 3 Days · Enterprise Labs

GBP £1,195 per person / INR ₹69K per person

---- What You'll Learn

Deep-Dive Curriculum Topics.

Every workshop is built around real production scenarios. No hello-world pipelines — every lab uses realistic datasets and enterprise-grade tooling.

SQL & Python Foundations

📋 All Levels — Beginner Track

🗃️

Advanced SQL — window functions, LAG/LEAD, recursive CTEs, explain plans

🐍

Python for data — pandas, polars, and efficient data wrangling at scale

☁️

Cloud storage patterns — ADLS Gen2, S3, partitioning and file formats

📐

Data modelling fundamentals — star schema, snowflake schema, SCD types

🔧

Introduction to dbt — sources, models, tests, and documentation

⚙️

Version control for data — Git workflows, branching, and PR reviews

2 Days · Lab: Snowflake Free Trial · Beginner-friendly

dbt & Modern Transformation

📋 dbt Developer Certification

🔧

dbt advanced — macros, Jinja templating, packages, and custom generic tests

📸

Snapshots & SCD Type 2 — tracking slowly changing dimensions with dbt

🔗

Exposures, metrics, and the semantic layer for self-serve analytics

🚦

dbt CI/CD — GitHub Actions, slim CI, and blue/green deployments

📊

dbt Cloud IDE vs CLI — environments, job scheduling, and alerting

🧪

dbt testing strategies — schema tests, data tests, singular tests

Full Day · Lab: dbt Cloud · Intermediate

Snowflake & Cloud Data Warehouse

📋 SnowPro Core

❄️

Snowflake architecture — virtual warehouses, micro-partitioning, and clustering

🔄

ELT patterns — Snowpipe, Streams, and Tasks for continuous data loading

🤝

Data sharing — secure shares, data exchange, and reader accounts

🐍

Snowpark for Python — pushing Python code into Snowflake for scalability

💰

Cost optimisation — result caching, auto-suspend, and credit monitoring

🔐

Security — role-based access, column masking, and row-level security

Full Day · Lab: Snowflake Trial · SnowPro Prep

Apache Spark & Databricks

📋 Databricks DE Associate

⚡

Spark architecture — driver, executors, DAGs, stages, and the Spark UI

🔀

PySpark optimisation — broadcast joins, partition tuning, and caching strategy

🧱

Delta Lake — ACID transactions, schema enforcement, and time travel

🏗️

Databricks Workflows — task orchestration, job clusters, and notebooks

📋

Unity Catalog — data governance, fine-grained access, and lineage

💡

Delta Live Tables — declarative pipeline authoring with DLT expectations

Full Day · Lab: Databricks Community · Advanced

Kafka & Real-Time Streaming

📋 Advanced Track

📡

Kafka architecture — brokers, topics, partitions, consumer groups, and offsets

🔌

Kafka Connect — source and sink connectors for zero-code integration

📋

Schema Registry — Avro, Protobuf, and schema evolution patterns

🌊

Spark Structured Streaming — watermarking, windowing, and late data handling

🔍

Exactly-once semantics — idempotent producers and transactional consumers

🏭

Production patterns — dead letter queues, compaction, and Kafka Streams

Full Day · Lab: Confluent Cloud · Advanced

DataOps & Governance

📋 Enterprise Track

🛡️

Data quality at scale — Great Expectations, dbt tests, and Monte Carlo observability

🏗️

Infrastructure as Code — Terraform for Snowflake, Databricks, and ADF

🔄

DataOps CI/CD — automated testing, deployment pipelines, and rollback strategies

🕸️

Data mesh architecture — domain ownership, data products, and federated governance

📖

Data cataloguing — Azure Purview, Atlan, and data lineage visualisation

🔐

Compliance & security — GDPR in data platforms, masking, and audit trails

Full Day · Enterprise Labs · Advanced

---- Tech Stack

Tools You'll Master in Every Lab.

Every session uses the same tools that data teams run at scale. No toy datasets. No simulators.

🔧

dbt Core & Cloud

Build modular, tested, documented SQL transformation layers with dbt. Covers models, macros, seeds, sources, snapshots, exposures, and CI/CD with GitHub Actions.

All levels

❄️

Snowflake Data Cloud

Virtual warehouses, result caching, zero-copy cloning, dynamic tables, and Snowpark for Python. Build performant, cost-efficient analytical workloads on Snowflake.

Intermediate+

⚡

Apache Spark & PySpark

Distributed data processing at petabyte scale. Covers DataFrames, RDDs, Structured Streaming, broadcast joins, shuffle partitioning, and Spark UI debugging.

Advanced track

🧱

Databricks & Delta Lake

The unified data intelligence platform. Unity Catalog governance, Workflows, Asset Bundles, Delta Live Tables, and ACID transactions with time travel on Delta Lake.

Intermediate+

🏭

Azure Data Factory

Cloud-native ETL/ELT orchestration on Azure. Copy activity, data flows, triggers, linked services, and integration patterns with ADLS Gen2 and Synapse Analytics.

All levels

📡

Apache Kafka & Flink

Event-driven data pipelines and real-time stream processing. Kafka Connect, Schema Registry, consumer groups, and Flink for stateful streaming transformations.

Advanced track

🌬️

Apache Airflow & Dagster

Pipeline orchestration and observability. Airflow DAGs, operators, sensors, XComs, and Dagster assets for modern declarative data pipeline management.

Intermediate+

🛡️

Data Quality & Observability

Great Expectations, dbt tests, Monte Carlo, and custom anomaly detection. Catch data issues before they reach dashboards and automate quality gates in CI/CD.

All levels

---- Why Data Engineering

Why Learn Data Engineering Now?

01

The AI Revolution Runs on Data Pipelines

Every AI model in production needs reliable, clean, and well-governed data. As AI adoption explodes, demand for the engineers who build the pipelines feeding these models has never been higher. Data Engineering is the unsexy foundation of every AI headline.

02

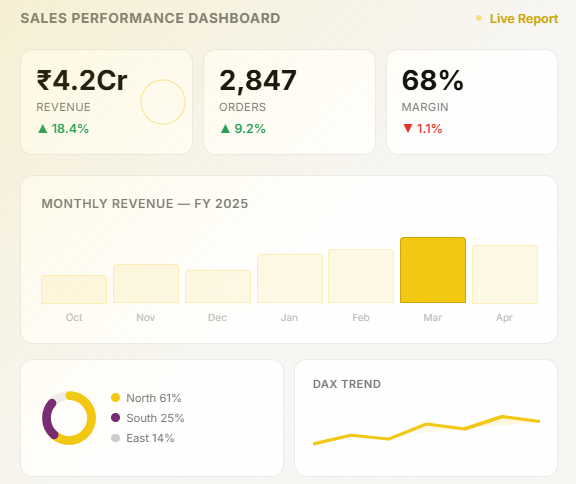

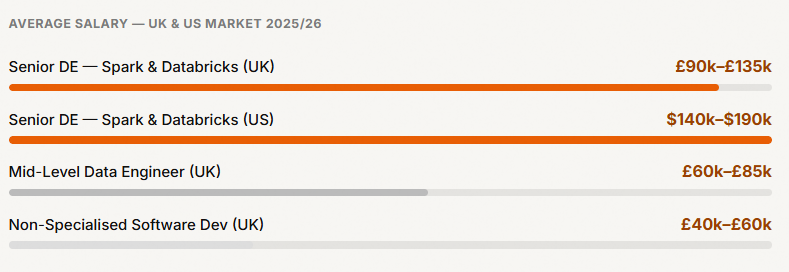

Senior DE Roles Command £90k–£150k

Senior Data Engineers with Spark, dbt, and Snowflake skills regularly clear £90k–£130k in London, and $130k–$180k in the US. The combination of cloud + modern stack is the premium skill bracket in the data market right now.

03

The Modern Data Stack Replaced Legacy ETL

SSIS, Informatica, and hand-rolled Python ETL are being replaced. dbt, Snowflake, Databricks, and Airflow are now the standard. Engineers who can operate the modern stack are in demand at startups and large enterprises alike.

78%

of Fortune 500 companies have adopted or are actively migrating to a modern data stack, creating tens of thousands of open roles globally that outpace supply.

04

Data Mesh & Governance Are Now Required Skills

As organisations scale data platforms, federated ownership, data products, and Unity Catalog-style governance are becoming core competencies, not nice-to-haves. This workshop covers the architectural thinking, not just the tooling.

---- Learner Stories

What Our Graduates Say.

“The dbt + Snowflake lab on day two completely changed how I think about transformation layers. I went back to work and refactored our entire SQL codebase into dbt models with proper tests within two weeks. Night and day improvement.”

Arjun S.

Data Engineer · HSBC, London

“I passed the Databricks Data Engineer Associate exam one month after the advanced workshop. The instructor had clearly sat the exam multiple times and knew exactly where people lose marks. The Spark optimisation section alone was worth the course fee.”

Priya K.

Senior Data Engineer · Deloitte, Pune

“We trained 22 analysts and junior engineers as a corporate batch. Within a quarter we had deprecated our legacy Informatica pipelines and moved everything to dbt + ADF. The team now ships pipeline changes in hours, not weeks. Transformative investment.”

Michael W.

Head of Data · Lloyds Banking Group